The Benchmark

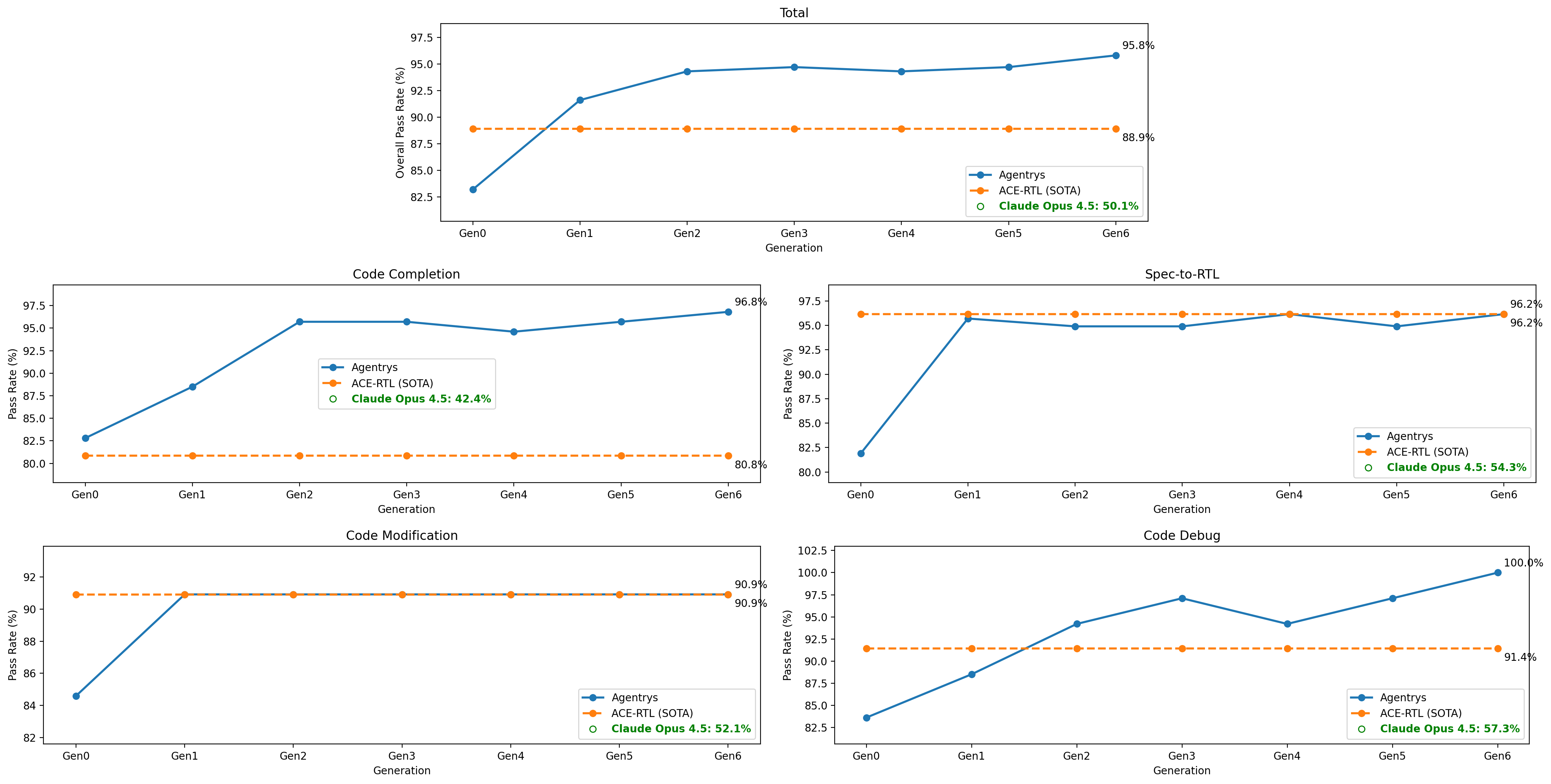

The Comprehensive Verilog Design Problems (CVDP) benchmark is one of the most rigorous evaluations of RTL automation available. It tests systems on four task categories (Code Completion, Spec-to-RTL, Code Modification, and Code Debug) that span a wide range of problem difficulty. We compare seven generations of our agent (Gen 0 through Gen 6) against ACE-RTL, the current state-of-the-art specialized system.

Results

Agentrys reaches a 95.8% overall pass rate at Gen 6, surpassing ACE-RTL's 88.9% by 6.9 points. On Code Debug, the system achieves a perfect 100% pass rate. Performance improves almost monotonically across generations, which supports the self-improving loop at the core of the ADA framework.

| Task | Agentrys (Gen 6) | ACE-RTL (SOTA) | Claude Opus 4.5 |
|---|---|---|---|
| Overall | 95.8% | 88.9% | 50.1% |
| Code Completion | 96.8% | 80.8% | 42.4% |
| Spec-to-RTL | 96.2% | 96.2% | 54.3% |
| Code Modification | 90.9% | 90.9% | 52.1% |
| Code Debug | 100.0% | 91.4% | 57.3% |

The sharpest gains appear on tasks that demand multi-step reasoning and tool use. On Code Completion, Agentrys leads ACE-RTL by 16.0 points. On Code Debug, it reaches a perfect score, 8.6 points ahead of SOTA; this is where agentic iteration over simulation feedback provides the clearest advantage over static, non-improving baselines. On Spec-to-RTL and Code Modification, the two systems are tied.
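The margins quoted above follow directly from the results table. As a quick check, the short Python sketch below recomputes them; the pass rates are copied from the table, while the dictionary layout and the `margin_over` helper are illustrative names introduced here, not part of any Agentrys or CVDP tooling.

```python
# Pass rates (%) copied from the results table in this post.
PASS_RATES = {
    "Overall":           {"Agentrys (Gen 6)": 95.8,  "ACE-RTL": 88.9, "Claude Opus 4.5": 50.1},
    "Code Completion":   {"Agentrys (Gen 6)": 96.8,  "ACE-RTL": 80.8, "Claude Opus 4.5": 42.4},
    "Spec-to-RTL":       {"Agentrys (Gen 6)": 96.2,  "ACE-RTL": 96.2, "Claude Opus 4.5": 54.3},
    "Code Modification": {"Agentrys (Gen 6)": 90.9,  "ACE-RTL": 90.9, "Claude Opus 4.5": 52.1},
    "Code Debug":        {"Agentrys (Gen 6)": 100.0, "ACE-RTL": 91.4, "Claude Opus 4.5": 57.3},
}


def margin_over(task, baseline, system="Agentrys (Gen 6)"):
    """Percentage-point lead of `system` over `baseline` on one task.

    `margin_over` is a hypothetical helper used only for this illustration.
    Rounding to one decimal avoids floating-point noise such as 6.8999999.
    """
    row = PASS_RATES[task]
    return round(row[system] - row[baseline], 1)


if __name__ == "__main__":
    for task in PASS_RATES:
        print(f"{task:18s} vs ACE-RTL: {margin_over(task, 'ACE-RTL'):+.1f} pts")
```

Running the script prints one line per task, which makes it easy to see that the lead over ACE-RTL comes from Code Completion and Code Debug, with no gap on the other two categories.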

Agent Architecture Evolution

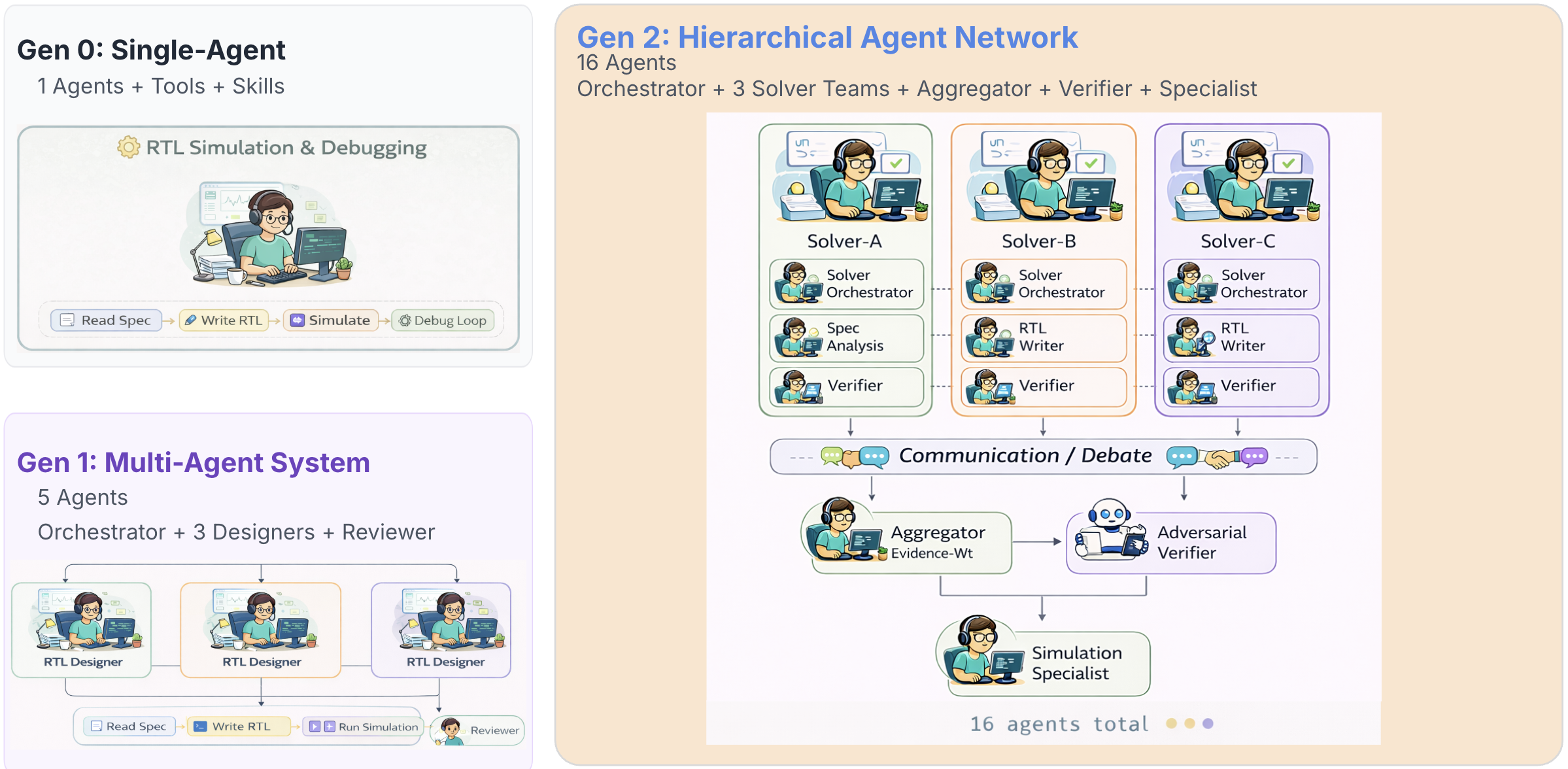

The gains across generations are driven by a systematic evolution of every aspect of agent design, including architecture, which grows from a single-agent system at Gen 0 to a 16-agent hierarchical network at Gen 2. Each generation introduces new coordination mechanisms that increase both the breadth of solution exploration and the rigor of verification.

The Gen 2 hierarchical network adds a communication and debate layer: after each solver team independently produces a candidate solution, an evidence-weighted aggregator reconciles the outputs and an adversarial verifier stress-tests the result before a simulation specialist validates it. This pipeline reduces both false positives and convergence failures. Each generation is itself a product of the self-improving loop, built from what the previous generation learned while running real design workflows.
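To make the stage ordering concrete, here is a minimal Python sketch of the flow described above: independent solvers, then aggregation, then adversarial verification, then simulation. It is not the Agentrys implementation. Every name in it (`Candidate`, `aggregate`, `run_pipeline`, the `evidence` score) is a hypothetical stand-in, and the aggregation rule (sum evidence per identical RTL text, keep the best-supported one) is an assumed simplification of "evidence-weighted".

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Candidate:
    team: str        # which solver team produced this candidate (hypothetical field)
    rtl: str         # the proposed RTL source text
    evidence: float  # assumed confidence score in [0, 1]


Solver = Callable[[str], Candidate]
Check = Callable[[Candidate], bool]


def aggregate(candidates: List[Candidate]) -> Candidate:
    """Assumed aggregation rule: sum evidence per distinct RTL text,
    then return the strongest candidate proposing the best-supported text."""
    support: Dict[str, float] = {}
    for c in candidates:
        support[c.rtl] = support.get(c.rtl, 0.0) + c.evidence
    best_rtl = max(support, key=support.get)
    return max((c for c in candidates if c.rtl == best_rtl),
               key=lambda c: c.evidence)


def run_pipeline(spec: str, solvers: List[Solver],
                 adversarial_verify: Check, simulate: Check) -> Optional[Candidate]:
    """Run the stages in the order the post describes; None means rejected."""
    candidates = [solve(spec) for solve in solvers]  # independent solver teams
    chosen = aggregate(candidates)                   # reconcile the outputs
    if not adversarial_verify(chosen):               # stress-test the result
        return None
    if not simulate(chosen):                         # final simulation check
        return None
    return chosen


if __name__ == "__main__":
    # Stub solvers standing in for real solver teams.
    solvers = [
        lambda spec: Candidate("A", "assign y = a & b;", 0.6),
        lambda spec: Candidate("B", "assign y = a | b;", 0.7),
        lambda spec: Candidate("C", "assign y = a & b;", 0.5),
    ]
    print(run_pipeline("2-input AND gate", solvers,
                       lambda c: True, lambda c: True))
```

In this toy run, team B has the highest individual score, but teams A and C agree on the same RTL and their combined evidence wins. That illustrates one way reconciliation can differ from simply picking the most confident solver; the real aggregator may work differently.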

References

- CVDP Benchmark: Comprehensive Verilog Design Problems. arxiv.org/abs/2506.14074
- ACE-RTL: When Agentic Context Evolution Meets RTL-Specialized LLMs. arxiv.org/abs/2602.10218

Cite this work

@misc{agentrys2026cvdp,
  title = {Agentrys: Self-improving Agent Solving CVDP RTL Coding Tasks},
  author = {Tsai, Yun-Da and Ding, Duo and Li, Wuxi and Ren, Haoxing},
  year = {2026},
  month = {February},
  url = {https://agentrys.ai/blog-cvdp.html}
}